Rethinking Machine Learning: The Role of Similarity

Dot Products, Decision Rules, and Distance Functions

To deconstruct of machine learning, I first looked at machine learning models as a series of feature transformations.

Then I did a workout. Between pull-ups and practicing handstands, I had a realization. There are two types of operations within trained machine learning models: Similarity operations and transformations. Fortunately, I had a notebook with me, and between exercises, I frantically wrote notes. The danger with such euphoric ideas is that one can get into a rabbit hole without feedback, and the ideas can turn out to be weird and wrong. That’s why I want to surface the ideas here in this post.

Here it is: A learned model needs to “store” the learned patterns. Not storing as in how you store your photo albums in the basement, but storing the patterns in an operational, usable way. This is done through similarity operations. Together with transformations, these two operations are the building blocks of machine learning models (the assumption throughout this post is that the model is already trained):

Similarity operations: Quantify a data point’s similarity to learned patterns.

Transformations: Transforming the features of a data point.

In this post, I talk about the similarity operations.

Similarity operations

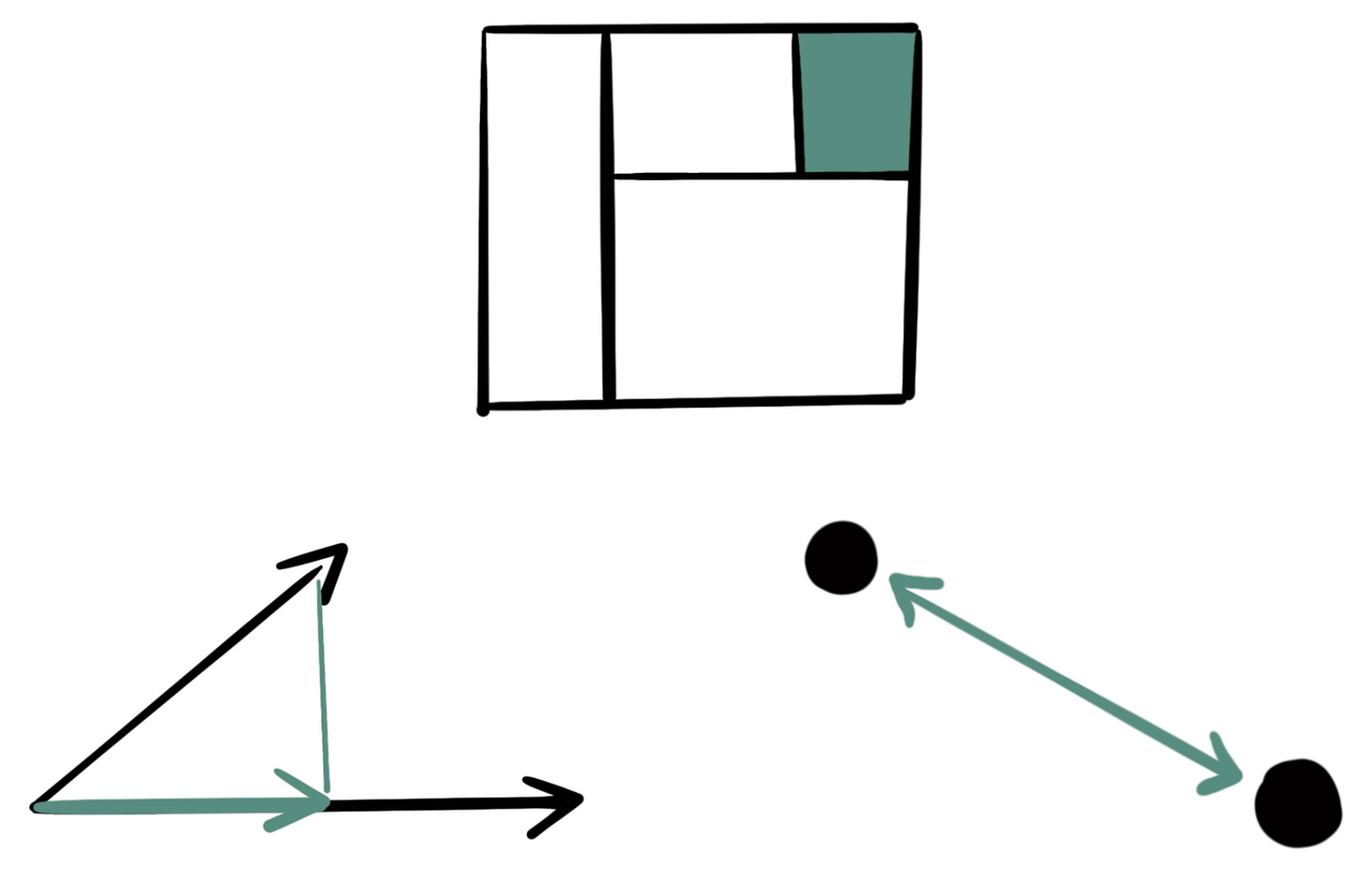

At the core of a learned model, we have similarity operations: These are mathematical procedures that compare a data point with a learned pattern. I came up with 3 essential similarity operations: the dot product, the decision rule, and the distance function.

Dot product: Multiplying two vectors with each other. The dot product is a function of the angle between the two vectors and their magnitudes. The more aligned the direction of two vectors, the greater the dot product and it’s zero if the two vectors are perpendicular. That allows an interpretation of the dot product as a measure of similarity in direction, especially when we normalize one of the vectors.

\( \mathbf{x} \cdot \mathbf{b} = x_1 b_1 + \ldots + x_p b_p \)The linear model, for example, is the dot product between features and weight vector. The dot product is also prevalent in neural networks, think of the attention layer in transformers where the query is the query vector multiplied with each word vector.

Decision rule: A series of if-else conditions bundled together with a prediction. A decision rule is binary: It may or may not apply to a data point. This makes it a crude binary measure of similarity: If the conditions apply, then the data point is “similar” to the pattern implied by the rule. If the conditions don’t apply it’s not similar. Two data points can be seen as similar when the same rules apply. Here is an example of the conditions of a rule:

\( \mathbb{I}\left[(x_1 > \theta_1) \land (x_2 \leq \theta_2) \land (x_3 = \theta_3)\right] \)The decision rule is dominant in tabular data and fuels all tree-based models like Random Forests and xgboost.

Distance function: The distance function measures how far away two data points are from each other. The smaller the distance the more similar two data points are. A typical example is the Euclidian distance function:

\(d(\mathbf{x}, \mathbf{z}) = \sqrt{\sum_{i=1}^p (x_i - z_i)^2} \)Distance functions are common in unsupervised learning like clustering and anomaly detection. Kernel density estimation is based on distance functions. Also, the radial basis function kernel used in SVMs is based on a distance function.

It’s no coincidence that the first three ML models you learn about in introduction courses on machine learning each represent one of the similarity operations:

The linear regression model is a dot product.

A decision tree is a collection of decision rules.

k-nearest neighbors are the simplest way to turn distance functions into a model.

For more complex models, we have to talk about transformations, like max-pooling in CNNs to distill images to vector-form or non-linear transformations to allow more complex operations (e.g. in fully connected NNs) or to match the target space (e.g. logistic regression). But going deeper into the transformations part is something for another post.

These thoughts are all part of my latest book project Reconstructing Machine Learning. Any feedback is appreciated. Whether you think that "ML model = similarity + transformation" is a flawed idea or you can think of other essential similarity operations, I would love to hear about it!

I'm not sure I see the distinction between dot product and distance? Isn't the dot product just the unscaled angular separation of the two vectors? I think if we do scale it, the angle between the two vectors will be a distance measure (symmetric, has a zero, obeys the triangle inequality)?

Fascinating idea! What would be the statistical analogue of the cross product? Maybe PCA.