A new chapter on generalization

When the pandemic hit, some machine learning research labs dropped their projects to work on COVID-19 classification models, many of them based on X-ray images.

Unfortunately, almost all of these models are useless.

A systematic review by Wynants et al. (2020) showed that only 2 of the 232 reviewed prediction models were promising. Ouch.

The remaining 230 had various problems, like non-representative selections of control patients, excluding patients with no event, risk of overfitting, unclear reporting, and lack of descriptions of the target population and care setting.

Problems like bias and overfitting are ultimately problems with generalization. But probably many of these models looked good on paper, meaning they might have a low test error. Because that’s how we do generalization in machine learning, right?

Unfortunately, a low error on unseen data that is independent and identically distributed (IID) is merely the start. It represents the narrowest view of generalization.

In practice, data are not IID, training setups don’t perfectly translate to the application, and it’s difficult to get representative samples.
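To make that gap concrete, here is a toy sketch on synthetic data. It is not taken from the book or from the reviewed models: the signal `sin(2x)`, the noise level, the input ranges, and all names are assumptions chosen only for illustration. A straight line is fit on inputs from one range, then scored on fresh IID data and on data from a shifted range.

```python
import numpy as np

rng = np.random.default_rng(0)

def true_signal(x):
    # Hypothetical data-generating process, chosen only for illustration.
    return np.sin(2 * x)

def sample(n, low, high):
    # Draw inputs uniformly from [low, high) and add Gaussian noise to the signal.
    x = rng.uniform(low, high, size=n)
    y = true_signal(x) + rng.normal(0.0, 0.1, size=n)
    return x, y

x_train, y_train = sample(200, 0.0, 1.0)   # training data
x_iid, y_iid = sample(200, 0.0, 1.0)       # test data from the same distribution
x_shift, y_shift = sample(200, 1.0, 2.0)   # test data from a shifted input range

# Fit a straight line by least squares.
coefs = np.polyfit(x_train, y_train, deg=1)

def mse(x, y):
    # Mean squared error of the fitted line on (x, y).
    return float(np.mean((np.polyval(coefs, x) - y) ** 2))

mse_iid = mse(x_iid, y_iid)
mse_shift = mse(x_shift, y_shift)

print(f"IID test MSE:     {mse_iid:.3f}")
print(f"Shifted test MSE: {mse_shift:.3f}")
```

In this setup, the IID test error stays small while the error on the shifted range is far larger. The model "looks good on paper" only as long as the application data resemble the training data.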

A new chapter on generalization

In February, Timo Freiesleben and I published an in-progress version of our book Supervised Machine Learning for Science. It’s free for everyone to read. And we just published the chapter on generalization.

Take a deep dive into generalization:

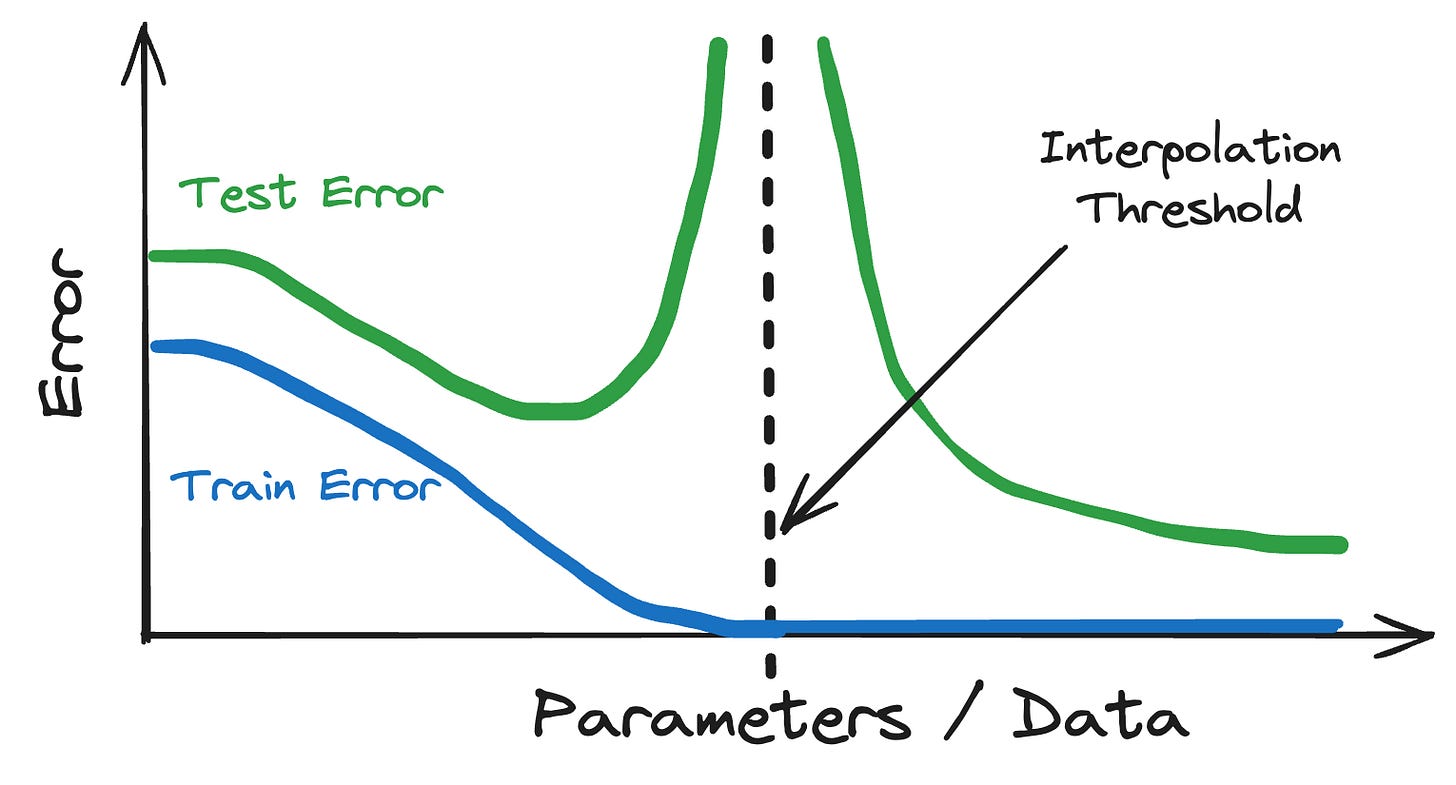

The chapter starts with statistical learning theory, the foundation of generalization in machine learning, covering topics like underfitting, overfitting, and double descent.

But the theory is just the start because the real world is messy and generalization in practice requires making more assumptions and understanding the data-generating process.

And there’s a different beast of generalization that is rarely talked about directly, but often indirectly: Generalization of insights.

It’s a theme we already touched on in the interpretability chapter, but we develop it further here. Generalization of model findings requires you to transfer insights from your model to the phenomenon, and from a sample to a larger population. It can be tricky to pull off.

In the end, generalization is never for free, unfortunately. You know, no free lunch, and no free dessert either.

Enjoy the read! And if you have any feedback, we are happy to hear it.