Machine learning never cheats but it may play flawed games

How to become better at designing prediction games

Streamer Rainbolt has become famous for his exceptional skill in GeoGuessr, a game where players guess locations from Google street-view images. He has even become a meme for his ability to identify "secret" places. When asked how he identifies countries from images, he mentions features like poles, bollards, and license plates. But there are also street-view-specific clues like camera artifacts from the street-view car that help identify Albania. Such game-specific clues obviously wouldn’t apply to the real world. But since it all happens in the context of GeoGuessr, all clues Rainbolt uses are fair game.

Let’s switch the focus and say we train a machine learning model to geotag photos, using images from Google Street View. When that model relies on shortcuts such as identifying Albania from camera quirks, it seems like the model is cheating.

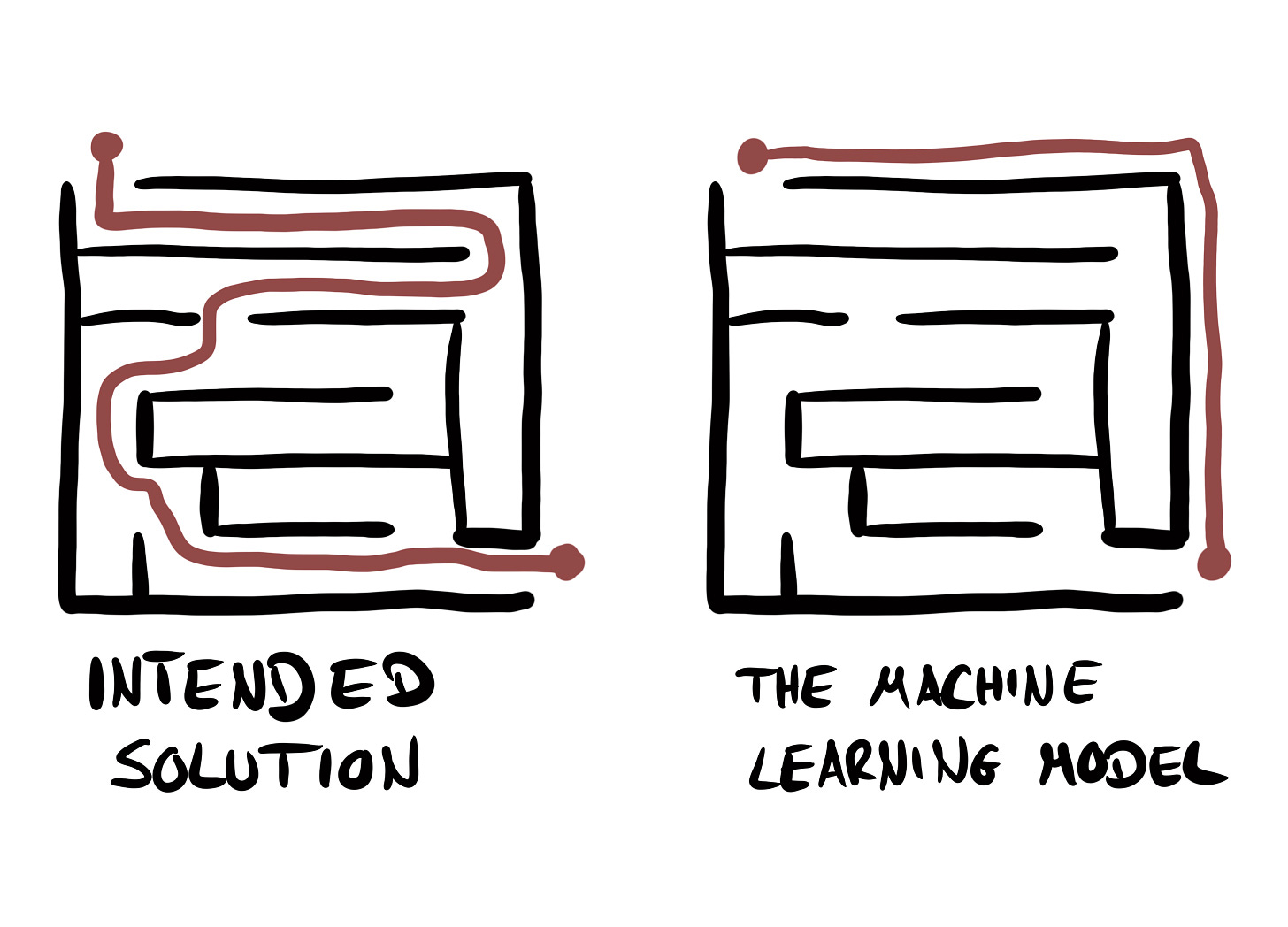

But here’s the thing: Rainbolt isn’t the only one playing a game. The ML algorithm is playing one, too. While it may seem like a model is cheating by exploiting unintended quirks in the data, I find it much more useful to think of ML as always playing by the rules. If the ML algorithm finds shortcuts, it’s because the game allows it.

However, machine learning doesn’t feel like designing a game. That’s because most of the game rules are implicitly encoded by how the data were generated. The overarching rule for GeoGuessr is that you can use any information in the image to guess the location. Whatever information is in the images dictates how easy or hard the task is. The trouble is that identifying these “rules” is difficult. It’s a bit of a chicken-and-egg problem: if we knew all the rules of the prediction game, we wouldn’t need machine learning in the first place. However, we can still try to detect many flaws in the data.

Detecting flaws in prediction games

Caruana et al. (2015) developed an interpretable model to predict the probability of death for patients who showed up at the ER with pneumonia. The goal: Identify high-risk patients who need to be admitted to the hospital. One of the rules the model identified was that asthma patients had a reduced risk of death. Odd, since the opposite is true: patients with asthma are at higher risk from pneumonia. There is a simple explanation for why the model learned that asthma means a lower risk of death: Patients with asthma tend to get more aggressive treatment for pneumonia, and that treatment is highly effective at preventing death from the infection. Hence asthma is a good predictor of a lower probability of death for this particular prediction task.
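To make the mechanism concrete, here is a minimal simulation sketch. All numbers are made up for illustration and are not taken from Caruana et al.: I simply assume that asthma raises the baseline risk, that asthma patients are much more likely to get aggressive treatment, and that the treatment cuts risk sharply. The function names are hypothetical as well.

```python
import random


def simulate_patients(n=200_000, seed=0):
    """Simulate (has_asthma, treated, died) tuples under made-up probabilities."""
    rng = random.Random(seed)
    patients = []
    for _ in range(n):
        has_asthma = rng.random() < 0.2
        # Assumption: asthma patients are far more likely to get aggressive care.
        treated = rng.random() < (0.9 if has_asthma else 0.1)
        # Assumption: asthma raises baseline risk; aggressive care cuts risk by 70%.
        risk = 0.15 if has_asthma else 0.10
        if treated:
            risk *= 0.3
        died = rng.random() < risk
        patients.append((has_asthma, treated, died))
    return patients


def death_rate(patients, asthma, treated=None):
    """Observed death rate for a group; treated=None ignores treatment status."""
    rows = [
        p for p in patients
        if p[0] == asthma and (treated is None or p[1] == treated)
    ]
    return sum(p[2] for p in rows) / len(rows)


if __name__ == "__main__":
    patients = simulate_patients()
    # What a model sees if treatment is not part of the game:
    print("asthma:   ", round(death_rate(patients, True), 3))
    print("no asthma:", round(death_rate(patients, False), 3))
    # Holding treatment fixed reveals the higher risk for asthma patients:
    for treated in (False, True):
        print(
            "treated" if treated else "untreated",
            round(death_rate(patients, True, treated), 3),
            round(death_rate(patients, False, treated), 3),
        )
```

In this toy setup the overall death rate is lower for asthma patients, although within each treatment group asthma patients die more often. A model that only sees the asthma flag plays the game correctly when it learns "asthma lowers risk".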

Here’s how Caruana et al. describe a possible solution to the problem:

We can “repair” the model by eliminating this term (effectively setting the weight on this graph to zero), or by using human expertise to redraw the graph so that the risk score for asthma=1 is positive, not negative. Because asthma is boolean, it is not necessary to use a graph, and we could present a weight and offset (RiskScore = w * hasAsthma + b) instead.

(Note: “graph” here refers to the effect plot that shows the feature on the x-axis and the contribution to the prediction on the y-axis)

The framing is that the model is broken and needs fixing. My point is that the model isn’t broken, but the game has implicit rules that conflict with the intended use case of the model. Their fix carries the risk that features correlated with asthma are still in the model and still yield a lower predicted probability for asthma patients. In 2017, Amazon scrapped their ML hiring algorithm because it was biased towards men and they couldn’t fix it by removing the gender attribute: the model used other means to identify women, such as all-women colleges or mentions of women’s sports clubs.
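The proxy problem can be sketched with another toy simulation. Again, every number and name here is an assumption for illustration, not Amazon's data or method: historical hiring labels are biased against women, the gender column is dropped, and the "model" is nothing more than the observed hire rate for each value of a single proxy feature (think of a résumé keyword that appears mostly for women).

```python
import random


def simulate_applicants(n=100_000, seed=1):
    """Simulate (is_woman, has_proxy, hired) tuples under made-up probabilities."""
    rng = random.Random(seed)
    rows = []
    for _ in range(n):
        is_woman = rng.random() < 0.5
        # Hypothetical proxy: a résumé keyword that appears mostly for women.
        has_proxy = rng.random() < (0.6 if is_woman else 0.02)
        # Assumption: the historical labels are biased against women.
        hired = rng.random() < (0.10 if is_woman else 0.30)
        rows.append((is_woman, has_proxy, hired))
    return rows


def fit_rate_by_proxy(rows):
    """'Model' without the gender column: hire rate for each proxy value."""
    model = {}
    for value in (False, True):
        group = [r for r in rows if r[1] == value]
        model[value] = sum(r[2] for r in group) / len(group)
    return model


def mean_score(rows, model, is_woman):
    """Average predicted score for one gender; gender is never a model input."""
    group = [r for r in rows if r[0] == is_woman]
    return sum(model[r[1]] for r in group) / len(group)


if __name__ == "__main__":
    rows = simulate_applicants()
    model = fit_rate_by_proxy(rows)
    print("score with proxy:   ", round(model[True], 3))
    print("score without proxy:", round(model[False], 3))
    print("mean score, women:  ", round(mean_score(rows, model, True), 3))
    print("mean score, men:    ", round(mean_score(rows, model, False), 3))
```

Even though gender is never an input, women receive lower average scores in this sketch because the proxy carries the same information. Removing a column changes the features, not the rules of the game.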

The pneumonia model’s problems run deeper than the asthma feature. The general game design flaw is that the variables used for prediction also influence the treatment, while the outcome depends on both the patient’s initial health status and the treatment. Whatever the health status implies about the treatment therefore gets absorbed into the effects of these features. Yet the goal was to use the model to decide on a treatment, which would be an extremely bad idea. It’s fair to assume that many more variables influence the treatment, and each of them makes the model worse for its intended use in treatment decisions.

Prediction games are a function of the data-generating process

What we typically think of when designing a machine learning task is the choice of task (e.g. survival, regression, classification), choice of loss and evaluation function, how to split data, which features to use, and so on.

But these tools are focused on training, which allows only limited control over the prediction game. One profession that is uniquely equipped to design prediction games is the statistician. I may be biased here since my background is in statistics, but the focus on understanding and shaping the data-generating process is one of the foundations of statistics. The data-generating process ultimately shapes what the prediction game is all about.

Here are just a few tools and lenses that can help you understand and shape a prediction game:

Sampling theory

Design of experiments

Missing data analysis

Causal inference

Interpretable models

In my book Mindful Modeler, I advocate drawing from multiple modeling approaches when modeling data. For example, learning to think like a statistician and being able to build causality into your models can greatly enhance prediction models that are built in the spirit of supervised machine learning.
