Machine Learning's Secret Sauce: Competition

What machine learning has to do with marathons, the Fosbury Flop, and running a sub-4-minute mile.

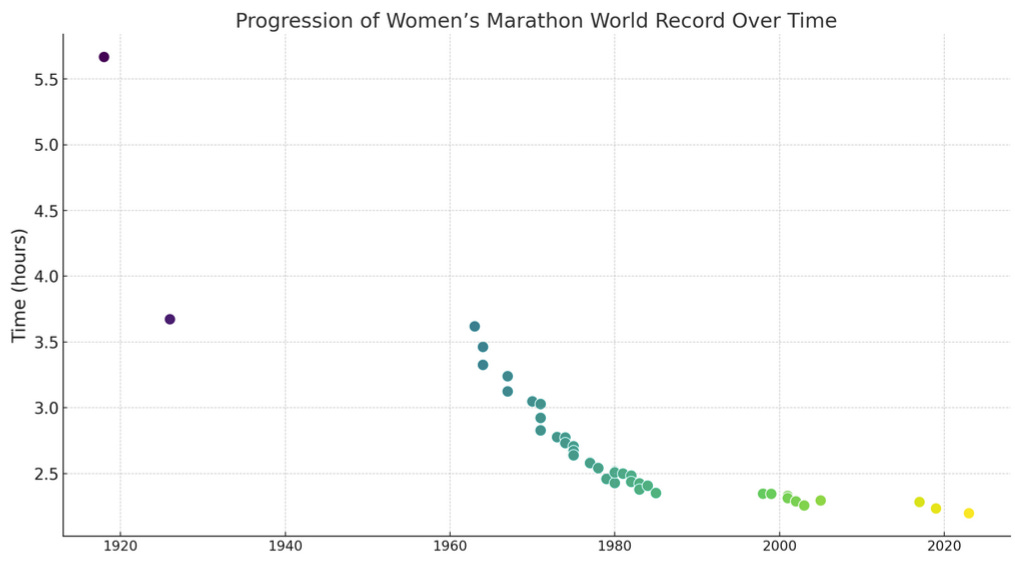

Humans have consistently lowered marathon records over the last 100 years. The trend holds for both men's and women's records.

100 years of running — a period too short for evolution to explain this progress. What's behind it? There are several tangible reasons: better shoes, improved nutrition, and more inclusive marathons, to name a few. Yet, there's a meta reason that underpins these improvements.

Competition.

Competition is a catalyst for progress.

For machine learning, competition is also a main driver of progress. Especially in supervised and self-supervised learning, competition manifests on multiple levels:

Hyperparameter tuning is a competition between hyperparameter configurations.

Model selection is a competition between different models.

Benchmarks are a competition between new and old ML algorithms.

ML challenges are competitions between modelers.
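To make the first of these levels concrete, here is a minimal sketch of hyperparameter tuning as a tiny tournament. Everything in it is an assumption for illustration: the toy data (y = x² plus noise), the k-nearest-neighbor regressor, the candidate values of k, and the helper names. The point is only the shape of the procedure: every candidate is scored on the same held-out data, and the leaderboard decides.

```python
import random

def make_data(n, rng):
    """Toy 1-D regression data: y = x^2 plus Gaussian noise (illustrative assumption)."""
    xs = [rng.uniform(-3, 3) for _ in range(n)]
    return [(x, x * x + rng.gauss(0, 1.0)) for x in xs]

def knn_predict(train, x, k):
    """Predict y at x as the mean target of the k nearest training points."""
    nearest = sorted(train, key=lambda p: abs(p[0] - x))[:k]
    return sum(y for _, y in nearest) / len(nearest)

def validation_mse(train, valid, k):
    """Mean squared error of k-NN on the held-out validation set."""
    return sum((knn_predict(train, x, k) - y) ** 2 for x, y in valid) / len(valid)

def run_competition(train, valid, candidates):
    """Score every candidate k on the same validation set, best (lowest MSE) first."""
    leaderboard = [(validation_mse(train, valid, k), k) for k in candidates]
    leaderboard.sort()
    return leaderboard

rng = random.Random(0)          # fixed seed so the run is repeatable
train = make_data(200, rng)
valid = make_data(100, rng)

# Hypothetical contestants: different neighborhood sizes.
leaderboard = run_competition(train, valid, candidates=[1, 3, 5, 10, 25, 100])
for mse, k in leaderboard:
    print(f"k={k:3d}  validation MSE={mse:.3f}")

best_mse, best_k = leaderboard[0]
```

Model selection works the same way, except that the contestants are different model families rather than different settings of one model.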

Let’s see what we can learn from sports about machine learning through the angle of competition.

Competition fosters jumps

At school, we had to learn how to high jump, specifically with a technique called the Fosbury Flop, where you run towards the bar in a curve, then jump over it backward with your back arched. I accidentally turned it into a “Fosbury Forearm Drop” and managed to break the bar in two. 😅

I cursed his name for a time, but today I admire Dick Fosbury, the inventor of the Fosbury Flop. Before he introduced it, jumpers used many different techniques to get over the bar. But today everyone’s using the Fosbury Flop.

In machine learning, we also have our Fosbury Flops. They are called things like “Transformers” or “gradient-boosted trees”. Techniques that — through the filter of competitions — proved themselves over and over again and found wide adoption.

Progress may be a sum of small improvements

The reduction in marathon times over the years doesn't trace back to a single breakthrough that we can tell a cool story about. No Fosbury Flop. It's a cumulative effect of minor improvements. Similarly, in machine learning, while groundbreaking architectures occasionally emerge, much of the progress is incremental. It could be a slight improvement in an algorithm, removing some bad apples from the data, or more effective training methods.

I realized this during my participation in machine learning competitions. I often hoped for a breakthrough that would yeet me on top of the leaderboard. Most of the time, however, it was a series of small improvements that helped me slowly creep upwards.

Breaking the barrier

In 1954, a man called Roger Bannister was the first person to run a mile in under 4 minutes. There was some dispute around the record, but we shall not discuss it here.

After that, many more runners managed to run a mile in under 4 minutes. You could argue that cumulative improvements simply shifted the whole distribution of top runners toward faster times.

But there’s an additional psychological effect at play: breaking the barrier.

Seeing what’s possible can be a huge motivator for people. I experience a similar phenomenon in machine learning. Seeing others far ahead in a competition makes me realize that there's more to be discovered or optimized in models and data. It's a powerful motivator. The progress of LLMs, especially open-source LLMs, also has a breaking-the-barrier component: OpenAI broke the barrier with GPT-3.5 and GPT-4, and now more and more LLMs are following.

Beware the shortcuts

However, intense competition can also lead to undesirable practices. Doping is one example; it has been a recurring problem in sports such as bodybuilding and cycling. In marathons, some of the “improvements” stem from optimizing the conditions of the benchmark itself: Berlin's flat course and mild climate make it a preferred location for record attempts. We observe the same in machine learning, where shortcuts exist too. For LLMs, for example, evaluation data may partially overlap with the training data. And if a Kaggle competition has target leakage, winning solutions will exploit it (unless that is explicitly forbidden).
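The training-data overlap problem can be probed with a rough heuristic. The sketch below flags evaluation documents that share at least one word n-gram with the training corpus. The function names, the toy documents, and the choice of n = 5 are all assumptions made for illustration; this is not the method of any particular benchmark, and a real check would need proper tokenization and far more data.

```python
def ngrams(text, n):
    """Set of word n-grams in a text (lowercased, whitespace-tokenized)."""
    tokens = text.lower().split()
    return {tuple(tokens[i:i + n]) for i in range(len(tokens) - n + 1)}

def contamination_rate(train_docs, eval_docs, n=5):
    """Fraction of eval docs sharing at least one n-gram with the training docs.

    Returns (rate, flagged_docs). n=5 is an arbitrary illustrative choice.
    """
    if not eval_docs:
        return 0.0, []
    train_grams = set()
    for doc in train_docs:
        train_grams |= ngrams(doc, n)
    flagged = [doc for doc in eval_docs if ngrams(doc, n) & train_grams]
    return len(flagged) / len(eval_docs), flagged

# Toy corpora (made up for this example).
train_docs = [
    "the quick brown fox jumps over the lazy dog near the river",
    "gradient boosted trees often win tabular competitions",
]
eval_docs = [
    "who said the quick brown fox jumps over the fence",
    "what is the capital of France",
]

rate, flagged = contamination_rate(train_docs, eval_docs)
print(f"{rate:.0%} of eval documents overlap with training data")
```

A check like this only catches verbatim overlap; paraphrased or translated test items would slip through, which is part of why contamination is hard to rule out.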