The Infinite Data Hallucinator

How to create arbitrary, fictive datasets with a simple script using GPT-3

tl;dr I created a Jupyter notebook that produces toy data. Repo link at the end of the post.

This newsletter is a bit different. If you follow me on Twitter, you might have seen that I’m exploring GPT-3 as a writing and productivity tool.

But this time, I experimented with a different question: Can large language models create (reasonably) realistic-looking data?

Such a dataset hallucinator could be useful in certain scenarios:

Test out new machine learning models with various plausible datasets

Toy datasets for educational material (tired of Boston Housing & co, anyone?)

So I built the “Infinite Data Hallucinator”.

It took me less than 2 hours.

And it works surprisingly well, with the language model GPT-3 doing the heavy lifting.

Let’s dive in.

The Infinite Data Hallucinator

The Infinite Data Hallucinator is just a Jupyter notebook that calls the OpenAI API (for GPT-3) and turns the result into a pandas DataFrame. The central piece is the prompt.

The Prompt

It was surprisingly straightforward to come up with the prompt. I’ve used GPT-3 before and could rely on some intuition about how to get the desired results out of the language model. The trick is to write the prompt so that GPT-3 completes it with a string that can be converted into a CSV.

Here is the prompt:

%s

Size: n=%s rows

Number of features: %s

This dataset is perfect for education, because it has a mix of numerical and categorical features, there are no missing values, and interesting correlations structures. Column names are all lower case and without special characters. The dataset is very realistic.

Raw CSV file (first column is id, showing all n rows, just copy and paste everything between --- and ---):

---

Before the prompt is sent to the API, the three %s placeholders are replaced, in order, with the user-provided dataset name, number of rows, and number of features.
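Here is a minimal sketch of this substitution step. It assumes Python's %-style string formatting (the `%s` placeholders suggest it, but the notebook's actual code may differ), and the line breaks inside the template are an assumption. `PROMPT_TEMPLATE` and `build_prompt` are hypothetical names.

```python
# Hypothetical reconstruction of the prompt template from the post.
# Line breaks are an assumption; the three %s placeholders are filled
# in order with: dataset name, number of rows, number of features.
PROMPT_TEMPLATE = """%s
Size: n=%s rows
Number of features: %s
This dataset is perfect for education, because it has a mix of numerical and categorical features, there are no missing values, and interesting correlations structures. Column names are all lower case and without special characters. The dataset is very realistic.
Raw CSV file (first column is id, showing all n rows, just copy and paste everything between --- and ---):
---
"""


def build_prompt(name: str, n_rows: int, n_features: int) -> str:
    """Fill the template with the user-provided name, row count, and feature count."""
    return PROMPT_TEMPLATE % (name, n_rows, n_features)


prompt = build_prompt("Price Of Sushi", 30, 4)
print(prompt.splitlines()[0])  # Price Of Sushi
```

Because the prompt ends with an opening `---`, GPT-3's most natural continuation is the CSV itself.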

Pretty stupid, but it works. As you can imagine, some parts of the prompt were trial and error. For example, at first GPT-3 would only return CSV files that were cut off with a “…”, so I added “showing all n rows, just copy and paste everything”.

From Strings To CSV

You’d imagine that getting the GPT-3 output reliably into CSV format would be a tedious step. Well, it hasn’t been a problem at all.

There are two reasons: GPT-3 produces coherent CSV syntax most of the time, and pandas is very forgiving when there are problems. Sometimes the token limit is reached and the CSV is cut off in the middle of a row; pandas then simply fills the missing values with NaNs.
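Below is a minimal sketch of the string-to-DataFrame step. It assumes the completion arrives as a plain string that may end with the closing `---` the prompt asks for. The helper `completion_to_df` and the sample completion are made up for illustration. The key behavior is real, though: `pandas.read_csv` fills the trailing fields of a row that has too few values with NaN.

```python
import io

import pandas as pd


def completion_to_df(completion: str) -> pd.DataFrame:
    """Turn a raw GPT-3 completion into a DataFrame (hypothetical helper)."""
    # The prompt ends with an opening '---', so everything up to the
    # closing '---' (if the model emitted one) should be CSV text.
    csv_text = completion.split("---", 1)[0].strip()
    # read_csv pads rows that have fewer fields than the header with NaN,
    # which covers the case where the token limit cut off the last row.
    return pd.read_csv(io.StringIO(csv_text))


# Made-up completion whose last row was truncated mid-way.
sample = "id,fish,price\n1,salmon,3.5\n2,tuna"
df = completion_to_df(sample)
print(df)
```

Rows with too *many* fields are a different story: by default `read_csv` raises an error for those, so that case still needs handling.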

Some examples

Enough technical details. Here are some examples: a sample of n=5 rows from some of the hallucinated datasets (in the titles, n is the number of generated rows and p the number of features).

“Price Of Sushi” (n=30, p=4)

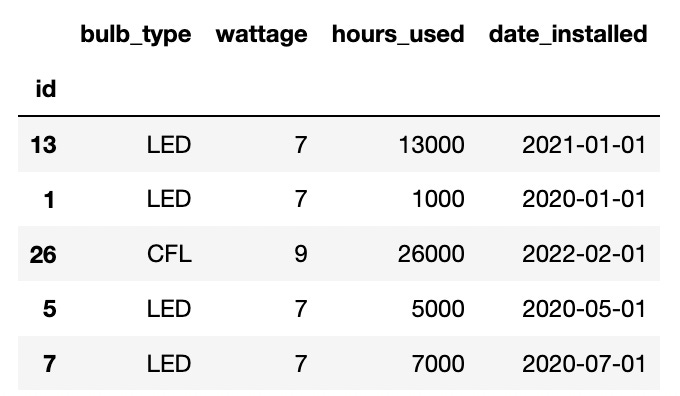

“Light Bulb Changes” (n=30, p=4)

“Cough Classification” (n=30, p=9)

Superficially, these datasets are rather coherent. But if you look closely, cracks start to show.

For example, in the light bulb data, one bulb was installed in 2023. That’s why I call it a “Hallucinator” and not a “Generator”. The datasets don’t necessarily make complete sense, but they have superficial coherence.

What Now?

It was an interesting experiment, and I think the results could be used for testing and toy examples.

You can easily adapt the prompt to create more specific datasets.

Let’s say you’re writing a library for time series data and want to make sure it is robust. First, you could add to the prompt that there should be a date column. Then you could add further requirements, such as using different date formats, and so on.
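One way to do this is sketched below. It assumes extra requirements are plain sentences placed just before the “Raw CSV file” instruction, so the completion should still start with CSV rows. The function `add_requirements`, the dataset name “Daily Web Traffic”, and the requirement wording are all hypothetical, not part of the original notebook.

```python
from typing import List


def add_requirements(prompt: str, requirements: List[str]) -> str:
    """Insert extra requirement sentences before the 'Raw CSV file' line (hypothetical helper)."""
    marker = "Raw CSV file"
    idx = prompt.find(marker)
    if idx == -1:
        # Without the marker we can't be sure where the CSV is expected to start.
        raise ValueError("prompt has no 'Raw CSV file' instruction")
    extra = " ".join(requirements) + "\n"
    return prompt[:idx] + extra + prompt[idx:]


# Abbreviated, hypothetical base prompt for a time series dataset.
base = (
    "Daily Web Traffic\n"
    "Size: n=30 rows\n"
    "Number of features: 5\n"
    "The dataset is very realistic.\n"
    "Raw CSV file (first column is id, showing all n rows, "
    "just copy and paste everything between --- and ---):\n"
    "---\n"
)
prompt = add_requirements(base, [
    "One column is a date column.",
    "The date column mixes different date formats, e.g. 2021-03-01 and 01/03/2021.",
])
print(prompt)
```

Keeping the opening `---` as the very last line matters: it is what nudges GPT-3 to answer with CSV rather than more prose.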

The script has some serious limitations. For example, because of the token limit, it’s difficult to create large datasets with many rows and/or many features.
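To see why, here is a rough back-of-the-envelope sketch. In the OpenAI completions API, the prompt and the completion share one context window, so the number of rows that fit is roughly the remaining token budget divided by the tokens per row. The ratio of about 4 characters per token is only a rule of thumb for English text (CSV full of digits may tokenize differently). The 2048-token default is an example value, not necessarily the limit of the model the notebook uses. All function names are hypothetical.

```python
import math


def estimate_tokens(text: str, chars_per_token: float = 4.0) -> int:
    """Rough token estimate based on the ~4 characters per token rule of thumb."""
    return math.ceil(len(text) / chars_per_token)


def max_rows_that_fit(prompt: str, header: str, sample_row: str,
                      context_limit: int = 2048,
                      chars_per_token: float = 4.0) -> int:
    """Estimate how many CSV rows fit into what is left of the context window."""
    # Prompt, CSV header, and rows all draw from the same token budget.
    budget = (context_limit
              - estimate_tokens(prompt, chars_per_token)
              - estimate_tokens(header + "\n", chars_per_token))
    per_row = estimate_tokens(sample_row + "\n", chars_per_token)
    return max(budget // per_row, 0)


# Hypothetical 800-character prompt and a short 4-column row.
print(max_rows_that_fit("x" * 800, "id,a,b,c", "1,2.5,red,10"))
```

More features mean longer rows and therefore fewer rows per request, which is why rows and features compete for the same budget.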

Disclaimer: Don’t interpret the datasets as if they were real data. Someone on Twitter pointed out the danger of misusing such a tool to create fake data, for example for fraudulent academic publications. For that, the data aren’t coherent enough. Plus, data isn’t necessarily the hurdle for scientific fraud: anyone can already produce arbitrary data in Excel.

You can find the script in this repository (OpenAI API key required).

Have fun experimenting with it.