Same Model, Different Uses

From Bayesian inference to supervised machine learning: the math and the models can overlap, but the modeling mindset dictates how a model is used and interpreted.

Is linear regression a model from statistics or machine learning?

I’ve heard this question more than once, but it starts from the false premise that models somehow exclusively belong to one approach or the other.

Instead, the question should be: What's the mindset of the modeler?

The Mindset Dictates The Interpretation

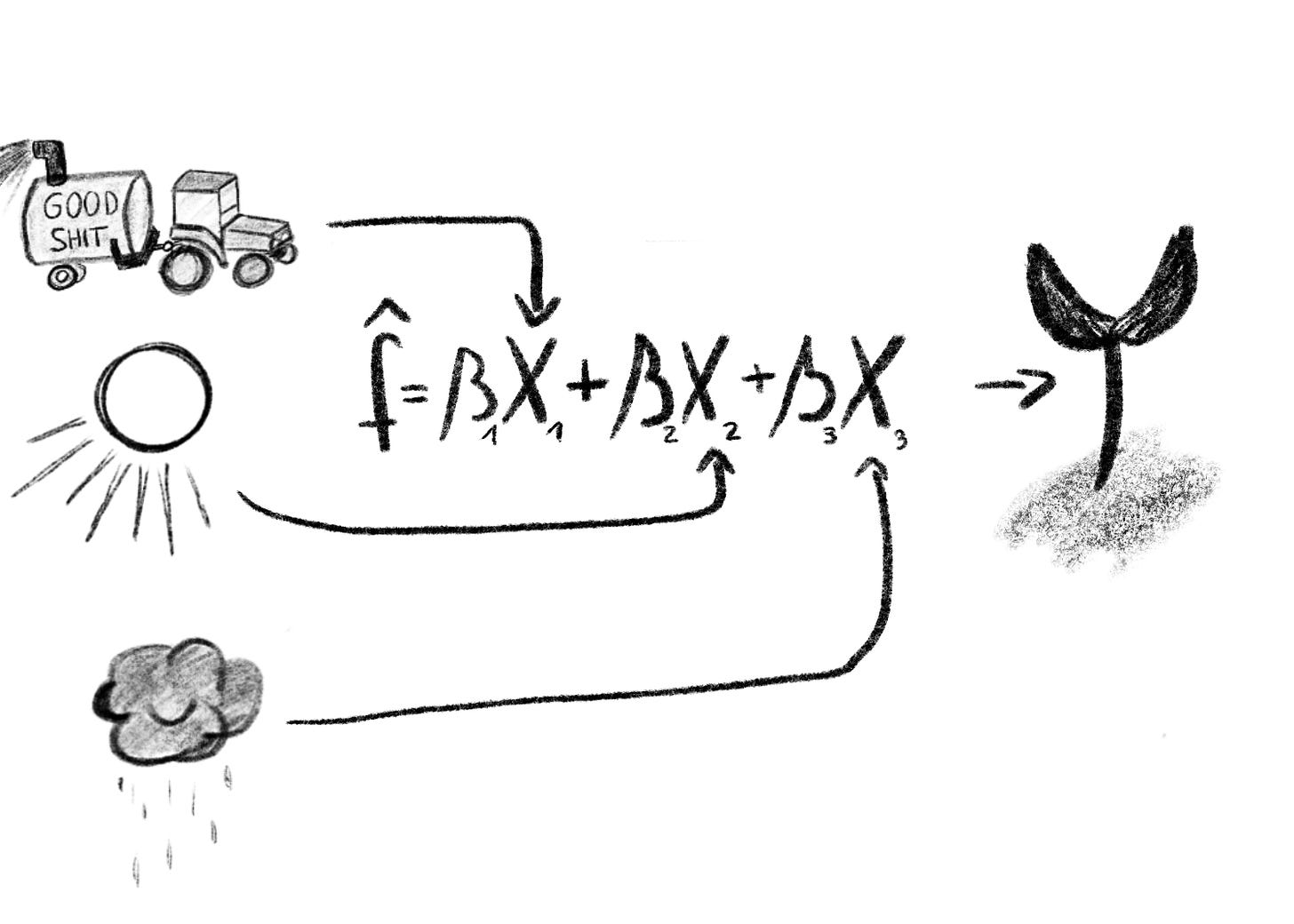

Imagine a researcher has data on rice harvests that include information about yield, fertilizer use, hours of sunshine, and amount of precipitation. The researcher gives the data to a data scientist to “do something with it”.

The data scientist produces a linear regression model that expresses how rice yield depends on fertilizer use, sunshine, and precipitation.

What can the researcher do with this model?

Without further information about the mindset behind the modeling process, we can’t answer that question.

Can the researcher use the model to accurately predict rice yields?

=> Only if the generalization error is low and evaluated properly.

May the researcher interpret the coefficient of fertilizer as causal to rice yield?

=> Only if the analysis is based on a causal model.

Can the researcher say whether the effect of fertilizer is significant, i.e. interpret the p-values?

=> Only if relying on a frequentist interpretation of probability and if model assumptions hold.

…

The mindset behind the model also influences how to interpret uncertainty, whether or not the model parameters are treated as random variables, whether to care about the distribution of the residuals and so on.

The Many Cultures Of Modeling

Let’s go through a few mindsets that could have produced the regression model.

Frequentist inference: The data scientist might have made assumptions about how the data are distributed, decided on a linear regression model, fitted the model, and diagnosed the model. Now the researcher may interpret the parameter estimates, including confidence intervals and hypothesis tests.
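As a rough sketch of what the frequentist reading involves, the snippet below fits the regression with ordinary least squares and derives standard errors and approximate 95% confidence intervals. The data are simulated, and all variable names, units, and coefficient values are made up for illustration. It also assumes a large-sample normal approximation in place of the usual t quantiles.

```python
import numpy as np
from statistics import NormalDist

rng = np.random.default_rng(0)

# Simulated stand-in for the rice data; every number here is hypothetical.
n = 200
fertilizer = rng.uniform(0, 100, n)      # hypothetical units: kg/ha
sunshine = rng.uniform(4, 10, n)         # hypothetical units: hours/day
precipitation = rng.uniform(50, 300, n)  # hypothetical units: mm
noise = rng.normal(0, 0.5, n)
rice_yield = 2.0 + 0.03 * fertilizer + 0.4 * sunshine + 0.005 * precipitation + noise

# Design matrix with an intercept column.
X = np.column_stack([np.ones(n), fertilizer, sunshine, precipitation])
names = ["intercept", "fertilizer", "sunshine", "precipitation"]

# Ordinary least squares point estimates.
beta_hat, *_ = np.linalg.lstsq(X, rice_yield, rcond=None)

# Residual variance and the estimated covariance matrix of the coefficients.
residuals = rice_yield - X @ beta_hat
dof = n - X.shape[1]
sigma2_hat = residuals @ residuals / dof
cov_beta = sigma2_hat * np.linalg.inv(X.T @ X)
std_err = np.sqrt(np.diag(cov_beta))

# Approximate 95% confidence intervals (normal quantile instead of t quantile).
z = NormalDist().inv_cdf(0.975)
ci_lower = beta_hat - z * std_err
ci_upper = beta_hat + z * std_err

for name, b, lo, hi in zip(names, beta_hat, ci_lower, ci_upper):
    print(f"{name:>13}: {b:.4f}  [{lo:.4f}, {hi:.4f}]")
```

These intervals only mean what they claim if the distributional assumptions hold, which is why model diagnostics belong to this mindset.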

Bayesian inference: The data scientist might be a Bayesian who treats the model parameters as random variables with prior distributions. The modeler then studies the posterior distributions of the coefficients.
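To make the Bayesian reading concrete, here is a minimal sketch using the conjugate case: a Gaussian prior on the coefficients and a noise standard deviation that is assumed to be known, so the posterior is available in closed form. The simulated data, the prior scale, and the noise level are all assumptions chosen for illustration. A real analysis would typically also put a prior on the noise and use a sampler.

```python
import numpy as np
from statistics import NormalDist

rng = np.random.default_rng(1)

# Simulated stand-in for the rice data; every number here is hypothetical.
n = 200
fertilizer = rng.uniform(0, 100, n)
sunshine = rng.uniform(4, 10, n)
precipitation = rng.uniform(50, 300, n)
X = np.column_stack([np.ones(n), fertilizer, sunshine, precipitation])
y = 2.0 + 0.03 * fertilizer + 0.4 * sunshine + 0.005 * precipitation + rng.normal(0, 0.5, n)

# Assumptions: known noise sd and an independent N(0, 10^2) prior per coefficient.
sigma = 0.5
prior_mean = np.zeros(X.shape[1])
prior_cov = np.eye(X.shape[1]) * 10.0**2

# Closed-form Gaussian posterior for the coefficients.
prior_precision = np.linalg.inv(prior_cov)
post_precision = prior_precision + X.T @ X / sigma**2
post_cov = np.linalg.inv(post_precision)
post_mean = post_cov @ (prior_precision @ prior_mean + X.T @ y / sigma**2)
post_sd = np.sqrt(np.diag(post_cov))

# Marginal posterior of the fertilizer coefficient (index 1) is normal.
fert_posterior = NormalDist(mu=float(post_mean[1]), sigma=float(post_sd[1]))
cred_lower = fert_posterior.inv_cdf(0.025)
cred_upper = fert_posterior.inv_cdf(0.975)
prob_positive = 1.0 - fert_posterior.cdf(0.0)

print(f"posterior mean: {post_mean[1]:.4f}")
print(f"95% credible interval: [{cred_lower:.4f}, {cred_upper:.4f}]")
print(f"P(fertilizer coefficient > 0 | data): {prob_positive:.3f}")
```

The output is a distribution over each coefficient, so statements such as "the probability that the coefficient is positive" are legitimate here, which they are not in the frequentist reading.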

Supervised machine learning: The model might have come out of a “contest” between multiple models, and the linear regression model just happened to be the best-performing one in a cross-validation setup.
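A "contest" of this kind could look roughly like the following sketch, which compares three candidates by 5-fold cross-validated RMSE. The data are simulated and the candidate set (a mean-only baseline, the linear model, and a variant with squared features) is a hypothetical choice for illustration, not a recommendation.

```python
import numpy as np

rng = np.random.default_rng(2)

# Simulated stand-in for the rice data; every number here is hypothetical.
n = 200
fertilizer = rng.uniform(0, 100, n)
sunshine = rng.uniform(4, 10, n)
precipitation = rng.uniform(50, 300, n)
features = np.column_stack([fertilizer, sunshine, precipitation])
y = 2.0 + 0.03 * fertilizer + 0.4 * sunshine + 0.005 * precipitation + rng.normal(0, 0.5, n)

# Each candidate is defined by how it turns raw features into a design matrix.
candidates = {
    "mean only": lambda F: np.ones((len(F), 1)),
    "linear": lambda F: np.column_stack([np.ones(len(F)), F]),
    "quadratic": lambda F: np.column_stack([np.ones(len(F)), F, F**2]),
}

# Use the same folds for every candidate so the comparison is fair.
folds = np.array_split(rng.permutation(n), 5)


def cv_rmse(make_design, F, target, folds):
    """Out-of-fold root mean squared error of a least-squares fit."""
    squared_errors = []
    for test_idx in folds:
        train_mask = np.ones(len(target), dtype=bool)
        train_mask[test_idx] = False
        beta, *_ = np.linalg.lstsq(make_design(F[train_mask]), target[train_mask], rcond=None)
        predictions = make_design(F[test_idx]) @ beta
        squared_errors.append((target[test_idx] - predictions) ** 2)
    return float(np.sqrt(np.mean(np.concatenate(squared_errors))))


scores = {name: cv_rmse(design, features, y, folds) for name, design in candidates.items()}
best = min(scores, key=scores.get)

for name, score in scores.items():
    print(f"{name:>10}: RMSE = {score:.3f}")
print("winner:", best)
```

In this mindset the winner is judged only by its estimated generalization error. Nothing in the procedure licenses an interpretation of the coefficients.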

Causal inference: The selection of the variables might have been based on causal identification, with the model constructed so that the coefficient of fertilizer use may be interpreted causally.
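Why variable selection matters for a causal reading can be sketched with a simulation. It assumes a hypothetical causal structure in which sunshine drives both fertilizer use and yield, so sunshine acts as a confounder. The structure and all numbers are invented for illustration. In a real analysis, the adjustment set would come from a causal model of the domain rather than from the data.

```python
import numpy as np

rng = np.random.default_rng(3)

# Hypothetical data-generating process: sunshine -> fertilizer, sunshine -> yield,
# fertilizer -> yield. The true causal effect of fertilizer is fixed by us.
n = 5000
true_effect = 0.03
sunshine = rng.uniform(4, 10, n)
fertilizer = 10.0 * sunshine + rng.normal(0, 10, n)
rice_yield = 2.0 + true_effect * fertilizer + 0.4 * sunshine + rng.normal(0, 0.5, n)


def ols(columns, target):
    """Least-squares coefficients; index 0 is the intercept."""
    X = np.column_stack([np.ones(len(target)), *columns])
    beta, *_ = np.linalg.lstsq(X, target, rcond=None)
    return beta


# Ignoring the confounder mixes the sunshine effect into the fertilizer coefficient.
naive = ols([fertilizer], rice_yield)[1]

# Adjusting for the confounder recovers a value close to the true effect.
adjusted = ols([fertilizer, sunshine], rice_yield)[1]

print(f"true effect:        {true_effect:.4f}")
print(f"naive estimate:     {naive:.4f}")
print(f"adjusted estimate:  {adjusted:.4f}")
```

Both fits are ordinary linear regressions. Only the one whose variables were chosen according to the assumed causal structure supports a causal interpretation of the fertilizer coefficient.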

Or the model was just copy-pasta from Stack Overflow. The modeler's only assumption was that the job would be successful if the code ran without errors.

In all these different approaches, the final model could have been (more or less) the same linear regression model. But all assumptions that were made during the modeling process have to be considered when using and interpreting it.

A Book About Modeling Mindsets

Different tasks require different modeling mindsets. Ideally, a data scientist would be an expert in as many modeling mindsets as possible. But that would take ages, especially since some of the assumptions and “cultures” behind the mindsets are rather implicit. That’s why I wrote Modeling Mindsets, my second book after Interpretable Machine Learning.

I tried to keep Modeling Mindsets super short (~100 pages + compact format) but also to squeeze lots of intuition into it. The book covers these mindsets:

- Statistical Modeling
- Frequentist Inference
- Bayesian Inference
- Likelihoodism
- Causal Inference
- Machine Learning
- Supervised Learning
- Unsupervised Learning
- Reinforcement Learning
- Deep Learning

The book will likely come out in December.
