Time series forecasting with tabular foundation models

This post is a quick primer on using tabular foundation models for time series forecasting.

In my earlier post, "I’m betting on tabular foundation models", I argued that tabular foundation models (TFMs) will work for all kinds of supervised ML tasks, because you can pre-train for any task for which you can construct a data generator. That includes time series forecasting.

Besides forecasting-specific pre-training, there is another path: reframe the forecasting task as a regression task and throw a tabular foundation model at it.

This post is about the reframing + TFM approach. The paper that applies it to TabPFN calls it TabPFN-TS, but you can swap TabPFN for other TFMs, such as TabICL or TabDPT.

The idea is simple: take a time series, automatically enrich it with temporal features derived from the timestamp, then one-shot it with a TFM regressor.

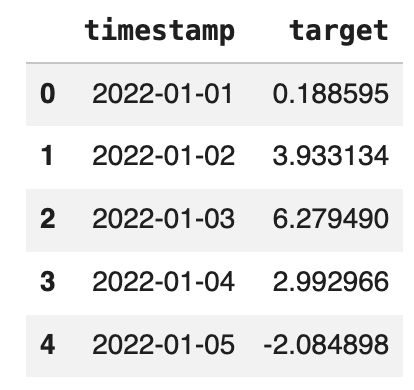

The input can be as simple as a target plus a timestamp:

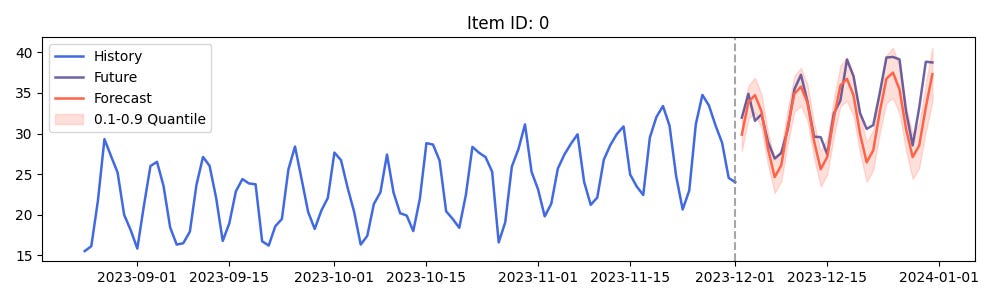

For this simple, univariate time series with equidistant time steps, we only need to provide the context data and the number of time steps to get a prediction, here with TabICL:

```python
model = TabICLForecaster()

pred = model.predict_df(context_df, prediction_length=10)
```

Time-based features are automatically added. These include an index that simply counts through the timestamps, cyclical (sin/cos) calendar features for day of year, day of week, and a few more features.
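To make the feature step more concrete, here is a minimal sketch of what such automatically added features could look like for daily data. It is not the actual TabPFN-TS or TabICL implementation: the function names (`cyclical`, `time_features`), the feature names, and the choice of periods (7 for day of week, 365.25 for day of year) are my own assumptions for illustration.

```python
import math
from datetime import date, timedelta


def cyclical(value, period):
    """Map a periodic value onto the unit circle as a (sin, cos) pair."""
    angle = 2.0 * math.pi * value / period
    return math.sin(angle), math.cos(angle)


def time_features(timestamps):
    """Turn a list of dates into one feature row (dict) per timestamp.

    Hypothetical feature set: a running index plus sin/cos encodings
    of day of week and day of year.
    """
    rows = []
    for i, ts in enumerate(timestamps):
        dow_sin, dow_cos = cyclical(ts.weekday(), 7)
        doy_sin, doy_cos = cyclical(ts.timetuple().tm_yday - 1, 365.25)
        rows.append({
            "running_index": i,  # simply counts through the timestamps
            "dow_sin": dow_sin, "dow_cos": dow_cos,
            "doy_sin": doy_sin, "doy_cos": doy_cos,
        })
    return rows


start = date(2024, 1, 1)  # a Monday
days = [start + timedelta(days=k) for k in range(14)]
features = time_features(days)
```

The sin/cos pair keeps the end of a cycle close to its start (Sunday sits next to Monday), which a plain integer encoding would not. The running index is what lets a regressor pick up a trend, since the calendar features alone repeat.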

Instead of using a vanilla tabular foundation model, you can also use a dedicated time series foundation model, such as TiRex, Toto, or Moirai-2.0. According to the paper, time series foundation models outperform tabular foundation models on univariate forecasting tasks. However, the leaderboard flips once other features are introduced: TabPFN-TS outperforms the time series-specific models on covariate-informed forecasts.

So what?

Let’s disentangle this:

Even if not pre-trained for forecasting, tabular foundation models like TabPFN work well as a backbone for time series forecasting.

I found it surprising that tabular foundation models outperformed time series foundation models in covariate-informed settings.

The wrapper that turns TabPFN into TabPFN-TS is not unique to tabular foundation models. At least to my understanding, it should also work with other “traditional” ML models.

However, providing such a wrapper for tabular foundation models is very on-brand, as it further pushes TFMs down the path of becoming batteries-included, does-a-lot-of-things-for-you-by-default models.
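To illustrate the point that the wrapper is not tied to TFMs, here is a rough sketch of the reframing with a deliberately simple stand-in model. Everything in it is hypothetical: `featurize`, `forecast`, and `NearestNeighborRegressor` are names I made up, the data is a toy series, and the code assumes daily, equidistant timestamps. The only contract the wrapper relies on is a `fit(X, y)` / `predict(X)` interface, so any regressor following that convention could be passed in instead.

```python
import math
from datetime import date, timedelta


def featurize(ts, index):
    """Feature row: running index plus sin/cos of the day of week."""
    angle = 2.0 * math.pi * ts.weekday() / 7
    return [float(index), math.sin(angle), math.cos(angle)]


class NearestNeighborRegressor:
    """Tiny stand-in for a 'traditional' model with fit/predict."""

    def fit(self, X, y):
        self.X, self.y = list(X), list(y)
        return self

    def predict(self, X):
        preds = []
        for row in X:
            # Compare calendar features only (skip the running index),
            # so a future Monday is matched with past Mondays.
            dists = [math.dist(row[1:], seen[1:]) for seen in self.X]
            nearest = min(dists)
            matches = [t for d, t in zip(dists, self.y) if d - nearest < 1e-9]
            preds.append(sum(matches) / len(matches))
        return preds


def forecast(regressor, timestamps, targets, prediction_length):
    """Fit on the featurized context, then predict the next daily steps."""
    n = len(timestamps)
    X_context = [featurize(ts, i) for i, ts in enumerate(timestamps)]
    future = [timestamps[-1] + timedelta(days=k + 1)
              for k in range(prediction_length)]
    X_future = [featurize(ts, n + k) for k, ts in enumerate(future)]
    regressor.fit(X_context, targets)
    return future, regressor.predict(X_future)


# Toy series with a weekly pattern: weekends are higher than weekdays.
start = date(2024, 1, 1)  # a Monday
context_days = [start + timedelta(days=k) for k in range(28)]
context_y = [20.0 if d.weekday() >= 5 else 10.0 for d in context_days]

future_days, preds = forecast(
    NearestNeighborRegressor(), context_days, context_y, prediction_length=7
)
```

The forecasting logic lives entirely in the feature construction and the fit-then-predict wrapper. What a TFM adds is that it needs no training or tuning per series, which is the part a traditional model would have to make up for.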
